UPD, Nov 2016: "Far" below refers to an older setup, when the light was used as a Far light. Now it is used upside-down as a High-beam light.

The camera software is closed, proprietary, and "enhances" the image in unknown ways.

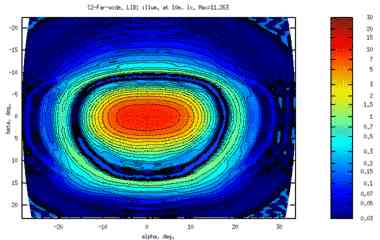

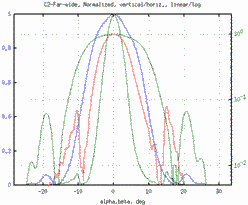

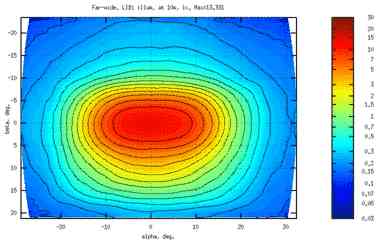

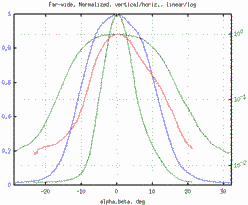

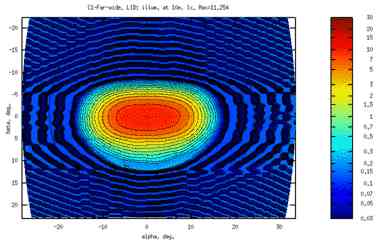

Here's a visualization (Far-wide) from two (two = high dynamic range) camera images.


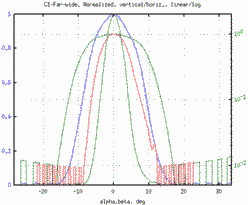

Clear enough, without comments.

Also note the unfaithful shape of the brights curve, and the overall shrinking. (The camera also cheats somehow with resolution. Taking that into account would shrink the curves by ~4% more.)

Of course, identical sensor data is used in both cases, and the visualizing script is the same. The only difference is in how the script's input images were obtained:

    # camera: de-gamma = make input data linear:
    OPTS="-type grayscale -background black -rotate -3 -quality 50 \
          -gamma 0.45455"
    convert $OPTS P1010323.JPG camera_far_d_a.jpg
    convert $OPTS P1010327.JPG camera_far_d_c.jpg

    # raw (this is a copy from image_proc_commands.txt for your convenience):
    OPTS="--temperature=4058 --green=0.851 --exp=0 --out-type=jpeg \
          --compression=50 --gamma=1 --grayscale=lightness"
    ufraw-batch $OPTS --rotate=-3 --output=far_d_a.jpg P1010323.RW2
    ufraw-batch $OPTS --rotate=-3 --output=far_d_c.jpg P1010327.RW2

The de-gamma step for the camera images is not exact: it uses a fixed power instead of the detailed gamma curve (with its linear part). But I think the result would be about that bad anyway.
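To get a feel for how inexact the fixed-power de-gamma is, here is a minimal sketch that compares it with a piecewise curve. It assumes the camera JPEGs are sRGB-encoded, which the camera does not guarantee, and it takes `-gamma 0.45455` to mean raising normalized values to the power 2.2. The function names are made up for this illustration.

```python
def srgb_to_linear(v):
    """Piecewise sRGB decoding: linear segment near black, 2.4 power above it.

    Assumption: the JPEG really uses the sRGB transfer curve.
    """
    if v <= 0.04045:
        return v / 12.92
    return ((v + 0.055) / 1.055) ** 2.4


def power_to_linear(v, gamma=2.2):
    """Fixed-power decoding, the approximation used for the camera images."""
    return v ** gamma


def compare(levels=(8, 32, 64, 128, 192, 255)):
    """Return (8-bit code, piecewise value, fixed-power value, ratio) rows."""
    rows = []
    for code in levels:
        v = code / 255.0
        exact = srgb_to_linear(v)
        approx = power_to_linear(v)
        rows.append((code, exact, approx, approx / exact))
    return rows


if __name__ == "__main__":
    for code, exact, approx, ratio in compare():
        print(f"{code:3d}  piecewise={exact:.5f}  power={approx:.5f}  ratio={ratio:.3f}")
```

Under these assumptions the two curves agree within a few percent in the midtones and diverge strongly only in the darkest codes, where the linear segment takes over.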

Alternative, photographic point of view.

Below are two crops from the same shot: left from the camera, right from raw.

How these 2 images were obtained:

    ufraw-batch --exp=2 --crop-left=1650 --crop-right=2070 --crop-top=205 \
        --crop-bottom=655 --wb=camera --out-type=jpeg --compression=70 \
        --output=_tmp_sim-com-raw.jpg P1010274.RW2

    # adjust levels in linear scale for clarity: 25%=2EV
    convert -crop "420x450+1650+194" -gamma 0.45455 -level 0,25% -gamma 2.2 \
        -quality 50 P1010274.JPG _tmp_sim-com-cam.jpg

    # thumbs are not visually different from originals
    convert -thumbnail 420 _tmp_sim-com-cam.jpg sim-com-cam.jpg
    convert -thumbnail 420 _tmp_sim-com-raw.jpg sim-com-raw.jpg
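The "25%=2EV" remark in the commands is plain arithmetic: +2 EV is a gain of 2^2 = 4 in linear light, so mapping 25% to full white does the same job as `--exp=2` on the raw side. A minimal sketch of that chain (linearize, stretch levels, re-encode), assuming a plain 2.2 power in both directions; the function names are hypothetical, not part of any tool:

```python
def ev_to_white_point(ev):
    """White point (as a 0..1 fraction) that gives a gain of 2**ev in linear light."""
    return 1.0 / (2.0 ** ev)


def level(v, black=0.0, white=1.0):
    """Linear level stretch: map [black, white] onto [0, 1] and clip."""
    scaled = (v - black) / (white - black)
    return min(1.0, max(0.0, scaled))


def push_exposure(encoded, ev, gamma=2.2):
    """De-gamma, stretch levels by `ev` stops, re-apply gamma (all values in 0..1)."""
    linear = encoded ** gamma                       # like -gamma 0.45455
    boosted = level(linear, 0.0, ev_to_white_point(ev))  # like -level 0,25% for ev=2
    return boosted ** (1.0 / gamma)                 # like -gamma 2.2


if __name__ == "__main__":
    print(ev_to_white_point(2))          # white point for +2 EV
    print(push_exposure(0.3, 2))         # a dark pixel after the +2 EV push
```

Doing the stretch between the two gamma steps is what keeps it a true exposure change; stretching the gamma-encoded values directly would apply a different gain.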

A few observations on what the camera did:

However, for general picture-taking by someone not skilled at photo editing (like me), the camera gives great-looking results with none of your time spent.

Conclusion: Never ever use camera-software images for measurement-related purposes! :) Even though they look better.

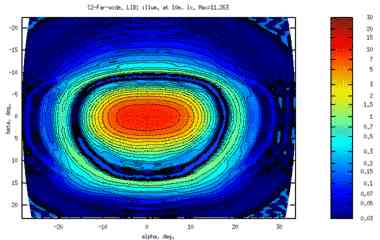

Here is a visualization using only 1 source jpg image (from raw of course).

To be compared with the good HDR image from the previous section.


The black area is a tight mesh of isolines around noise. (Again, identical sensor data were used.)
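For comparison, here is a rough idea of what a second exposure buys. This is only a sketch, not the visualizing script used here: it assumes two linear frames of the same scene, a known exposure ratio between them, and a made-up saturation threshold. Dim pixels are taken from the longer exposure, where they sit well above the noise, and clipped pixels fall back to the shorter one.

```python
def merge_two_exposures(long_px, short_px, ratio, sat=0.95):
    """Merge per-pixel linear values (0..1) from a long and a short exposure.

    ratio: long exposure divided by short exposure (hypothetical, e.g. 16).
    sat:   level above which the long exposure is treated as clipped (assumed).
    The result is expressed in the units of the short exposure.
    """
    merged = []
    for long_v, short_v in zip(long_px, short_px):
        if long_v < sat:
            # Long exposure is usable: rescale it to short-exposure units.
            merged.append(long_v / ratio)
        else:
            # Long exposure is clipped: only the short exposure is valid.
            merged.append(short_v)
    return merged


if __name__ == "__main__":
    long_frame = [0.08, 0.40, 1.00]     # dim, medium, clipped
    short_frame = [0.005, 0.025, 0.50]
    print(merge_two_exposures(long_frame, short_frame, ratio=16))
```

With a single frame there is no longer exposure to fall back on, so the dim regions carry mostly noise, and the isolines trace that noise.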

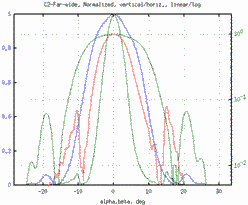

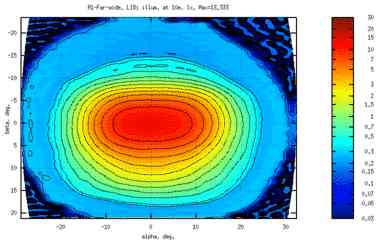

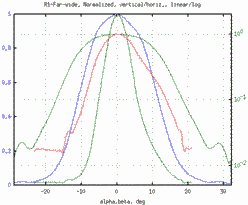

And here's what naive shooting, that is, 1 source jpg file (not 2 or 3) from the camera's black box (not from raw), would result in:
